HUMAN-IN-THE-LOOP REQUIREMENTS AGREEMENT

AGREEMENT DATE: [DATE]

AGREEMENT NUMBER: [HITL-AGREEMENT-NUMBER]

PARTIES

AI SYSTEM PROVIDER/DEVELOPER ("Provider"):

- Legal Name: [PROVIDER LEGAL NAME]

- Address: [FULL ADDRESS]

- Contact: [NAME, EMAIL, PHONE]

AI SYSTEM DEPLOYER/OPERATOR ("Deployer"):

- Legal Name: [DEPLOYER LEGAL NAME]

- Address: [FULL ADDRESS]

- Contact: [NAME, EMAIL, PHONE]

RECITALS

WHEREAS, Provider has developed or provides an AI system that requires or benefits from human oversight;

WHEREAS, Deployer intends to deploy and operate the AI system and must ensure appropriate human oversight in accordance with applicable regulations and best practices;

WHEREAS, regulations including the EU AI Act (Article 14), the Colorado AI Act, and other frameworks require meaningful human oversight of certain AI systems;

WHEREAS, the parties wish to establish clear requirements and responsibilities for human oversight of the AI system;

NOW, THEREFORE, the parties agree as follows:

ARTICLE 1: DEFINITIONS

1.1 "AI System" means the artificial intelligence system identified in Schedule A.

1.2 "Automated Decision" means a decision made or substantially influenced by the AI System without human intervention.

1.3 "Human-in-the-Loop (HITL)" means a human oversight model where a human must review and approve AI System outputs before they are implemented or acted upon.

1.4 "Human-on-the-Loop (HOTL)" means a human oversight model where humans monitor AI System operations and can intervene, but do not approve each individual output.

1.5 "Human-in-Command (HIC)" means a human oversight model where humans maintain strategic decision-making authority while the AI System handles operational aspects.

1.6 "Meaningful Human Control" means human oversight that involves genuine assessment, discretion, and the ability to influence or override AI System outputs.

1.7 "Override" means a human decision to reject, modify, or supersede an AI System output.

1.8 "Qualified Personnel" means individuals who meet the competency requirements specified in this Agreement.

ARTICLE 2: HUMAN OVERSIGHT MODEL

2.1 Oversight Model Selection

The following human oversight model(s) shall apply to the AI System:

☐ Human-in-the-Loop (HITL)

- Human approval required before AI outputs are implemented

- Applicable to: [SPECIFY DECISIONS/OUTPUTS]

☐ Human-on-the-Loop (HOTL)

- Humans monitor operations with ability to intervene

- Applicable to: [SPECIFY DECISIONS/OUTPUTS]

☐ Human-in-Command (HIC)

- Humans maintain strategic authority

- Applicable to: [SPECIFY DECISIONS/OUTPUTS]

☐ Tiered Oversight

- Different oversight levels for different decision types (see Section 2.2)

2.2 Oversight by Decision Type

| Decision Type | Risk Level | Oversight Model | Review Requirement |
|---|---|---|---|
| [TYPE 1] | ☐ High ☐ Med ☐ Low | ☐ HITL ☐ HOTL ☐ HIC | [REQUIREMENT] |
| [TYPE 2] | ☐ High ☐ Med ☐ Low | ☐ HITL ☐ HOTL ☐ HIC | [REQUIREMENT] |
| [TYPE 3] | ☐ High ☐ Med ☐ Low | ☐ HITL ☐ HOTL ☐ HIC | [REQUIREMENT] |
| [TYPE 4] | ☐ High ☐ Med ☐ Low | ☐ HITL ☐ HOTL ☐ HIC | [REQUIREMENT] |

2.3 High-Risk Decision Criteria

The following criteria trigger mandatory human review (HITL):

☐ Decisions affecting employment status

☐ Credit, lending, or financial decisions

☐ Insurance underwriting or claims decisions

☐ Healthcare access or treatment decisions

☐ Educational opportunity decisions

☐ Housing access decisions

☐ Decisions above specified value threshold: $[AMOUNT]

☐ Decisions affecting vulnerable populations

☐ Decisions with potential for significant harm

☐ Confidence scores below [THRESHOLD]

☐ Anomalous or edge case inputs

☐ Other: [SPECIFY]
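
For implementation teams, the criteria above could be encoded as a routing rule that decides whether an output must go to a human reviewer. The following is a minimal sketch only: the `Decision` fields, the category labels, and the numeric thresholds are hypothetical placeholders standing in for the bracketed values in this Section, not terms of this Agreement.

```python
from dataclasses import dataclass

# Hypothetical placeholder values; the real ones come from the
# bracketed fields selected by the parties in Section 2.3.
VALUE_THRESHOLD = 10_000.0    # stands in for $[AMOUNT]
CONFIDENCE_THRESHOLD = 0.80   # stands in for [THRESHOLD]
HIGH_RISK_CATEGORIES = {
    "employment", "credit", "insurance",
    "healthcare", "education", "housing",
}


@dataclass
class Decision:
    """Illustrative shape of one AI System output awaiting routing."""
    category: str
    value: float
    confidence: float
    affects_vulnerable_population: bool = False
    is_anomalous: bool = False


def hitl_triggers(d: Decision) -> list[str]:
    """Return the Section 2.3 criteria that this decision meets."""
    triggers = []
    if d.category in HIGH_RISK_CATEGORIES:
        triggers.append(f"category:{d.category}")
    if d.value > VALUE_THRESHOLD:
        triggers.append("value_above_threshold")
    if d.affects_vulnerable_population:
        triggers.append("vulnerable_population")
    if d.confidence < CONFIDENCE_THRESHOLD:
        triggers.append("low_confidence")
    if d.is_anomalous:
        triggers.append("anomalous_input")
    return triggers


def requires_human_review(d: Decision) -> bool:
    """Any single trigger is enough to require HITL review."""
    return bool(hitl_triggers(d))
```

Returning the list of triggers, rather than only a yes/no answer, lets the reason for routing be recorded alongside the decision.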

ARTICLE 3: PROVIDER RESPONSIBILITIES

3.1 Technical Capabilities

Provider shall ensure the AI System includes the following human oversight capabilities:

☐ Visibility Features:

- Display of AI System outputs before implementation

- Explanation of reasoning and key factors

- Confidence scores or uncertainty indicators

- Flagging of anomalies or edge cases

- Access to underlying data used in decision

☐ Intervention Features:

- Ability to approve, reject, or modify outputs

- Override capability that supersedes AI decisions

- Ability to adjust parameters or thresholds

- Emergency stop or disable functionality

- Ability to exclude individuals or cases from automation

☐ Monitoring Features:

- Real-time monitoring dashboard

- Performance and accuracy metrics

- Bias and fairness indicators

- Alert mechanisms for anomalies

- Audit trail of all decisions and overrides

3.2 Documentation

Provider shall provide:

☐ User documentation for oversight features

☐ Technical documentation of override mechanisms

☐ Training materials for Qualified Personnel

☐ Recommended oversight procedures

☐ Information needed for Deployer's compliance

3.3 System Design

Provider represents that the AI System:

☐ Is designed to support human oversight as described herein

☐ Does not include features that would undermine human oversight

☐ Allows humans to disregard, override, or reverse outputs

☐ Includes appropriate explainability for human understanding

☐ Provides information in a timely manner for human review

3.4 Updates and Changes

Provider shall:

☐ Notify Deployer of changes affecting oversight capabilities

☐ Maintain oversight features through updates

☐ Not remove or diminish oversight capabilities without consent

ARTICLE 4: DEPLOYER RESPONSIBILITIES

4.1 Implementation of Oversight

Deployer shall:

☐ Implement human oversight as specified in this Agreement

☐ Not disable or bypass required oversight mechanisms

☐ Ensure human review for designated decision types

☐ Allocate sufficient resources for effective oversight

☐ Maintain documented oversight procedures

4.2 Qualified Personnel

Deployer shall ensure oversight is performed by Qualified Personnel who:

☐ Have completed required training (see Section 4.3)

☐ Understand the AI System's capabilities and limitations

☐ Have authority to override AI System outputs

☐ Are not subject to undue pressure to accept AI outputs

☐ Have time and resources to perform meaningful review

☐ Meet role-specific qualifications: [SPECIFY]

4.3 Training Requirements

| Training | Content | Frequency | Duration |
|---|---|---|---|
| AI System Overview | [CONTENT] | Initial + Annual | [HOURS] |
| Oversight Procedures | [CONTENT] | Initial + Annual | [HOURS] |
| Bias Recognition | [CONTENT] | Initial + Annual | [HOURS] |
| Domain-Specific | [CONTENT] | As needed | [HOURS] |

4.4 Oversight Procedures

Deployer shall establish and maintain documented procedures for:

☐ Review process for AI outputs

☐ Criteria for approving, modifying, or rejecting outputs

☐ Escalation procedures for complex cases

☐ Override documentation requirements

☐ Handling of disagreements between human and AI

☐ Quality assurance for oversight activities

4.5 Staffing and Resources

Deployer shall maintain:

☐ Sufficient staffing for required oversight volume

☐ Maximum case-to-reviewer ratios: [RATIO]

☐ Maximum review turnaround times: [TIME]

☐ Backup personnel for coverage

☐ Tools and access needed for effective review

4.6 Avoiding Automation Bias

Deployer shall take measures to prevent automation bias:

☐ Training on automation bias risks

☐ Encouragement of independent judgment

☐ Periodic review of override rates

☐ No penalties for appropriate overrides

☐ Rotation of reviewers (if applicable)

ARTICLE 5: OVERRIDE AND INTERVENTION

5.1 Override Authority

Qualified Personnel have authority to:

☐ Reject AI System outputs

☐ Modify AI System outputs before implementation

☐ Approve AI System outputs with additional conditions

☐ Request additional information before decision

☐ Escalate to higher authority

☐ Temporarily suspend AI System operations

5.2 Override Documentation

Each override shall be documented with:

☐ Date and time

☐ Identity of reviewer

☐ Original AI System output

☐ Human decision made

☐ Reason for override

☐ Any escalation or consultation
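
Where overrides are logged electronically, the fields listed above map naturally onto a single record type. The sketch below is one possible shape, assuming hypothetical field names and free-text values; it is illustrative and does not prescribe a storage format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class OverrideRecord:
    """One override entry covering the Section 5.2 fields (names are hypothetical)."""
    reviewer_id: str                  # identity of reviewer
    original_output: str              # original AI System output
    human_decision: str               # human decision made
    reason: str                       # reason for override
    escalation: Optional[str] = None  # any escalation or consultation
    # Date and time, recorded in UTC when the record is created.
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self) -> None:
        # Reject records that leave a required text field blank.
        for name in ("reviewer_id", "original_output",
                     "human_decision", "reason"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} is required for an override record")
```

Making the record immutable (`frozen=True`) reflects the expectation that an audit entry is not edited after the fact; corrections would be added as new records.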

5.3 Override Protection

☐ Reviewers shall not face adverse consequences for good-faith overrides

☐ Override rates shall not be used punitively

☐ Concerns about AI System performance shall be addressed

5.4 Emergency Intervention

In case of emergency or system malfunction:

☐ Deployer may immediately suspend AI System operations

☐ Provider shall be notified within [TIMEFRAME]

☐ Operations resume only after issue is resolved

☐ Provider shall cooperate with investigation

ARTICLE 6: MONITORING AND REPORTING

6.1 Monitoring Requirements

Deployer shall monitor:

| Metric | Frequency | Threshold | Action if Exceeded |
|---|---|---|---|
| Override rate | [FREQUENCY] | [THRESHOLD] | [ACTION] |
| Accuracy rate | [FREQUENCY] | [THRESHOLD] | [ACTION] |
| Review turnaround time | [FREQUENCY] | [THRESHOLD] | [ACTION] |
| Anomaly frequency | [FREQUENCY] | [THRESHOLD] | [ACTION] |
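
As an illustration of how a row of this table might be evaluated, the sketch below computes an override rate and returns the agreed action when the threshold is exceeded. The function names, the definition of override rate as overrides divided by reviewed outputs, and the example values are assumptions made for illustration; the parties define the actual metrics and thresholds in the table.

```python
from typing import Optional


def override_rate(overrides: int, reviewed: int) -> float:
    """Fraction of reviewed outputs that a human overrode (assumed definition)."""
    if reviewed <= 0:
        raise ValueError("reviewed must be positive")
    if not 0 <= overrides <= reviewed:
        raise ValueError("overrides must be between 0 and reviewed")
    return overrides / reviewed


def action_if_exceeded(value: float, threshold: float,
                       action: str) -> Optional[str]:
    """Return the agreed action when the metric exceeds its threshold."""
    return action if value > threshold else None


# Hypothetical example: 15 overrides out of 100 reviews, threshold 10%.
rate = override_rate(15, 100)
print(action_if_exceeded(rate, 0.10, "notify Provider"))
```

This check covers only the "exceeded" direction named in the table. Because Section 4.6 calls for periodic review of override rates as a guard against automation bias, a lower bound may also be worth tracking.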

6.2 Reporting

Deployer shall provide reports to:

| Recipient | Content | Frequency |
|---|---|---|
| Provider | [CONTENT] | [FREQUENCY] |
| Internal stakeholders | [CONTENT] | [FREQUENCY] |
| Regulators (if required) | [CONTENT] | [FREQUENCY] |

6.3 Audit Trail

An audit trail shall be maintained that includes:

☐ All AI System outputs

☐ All human decisions

☐ All overrides with reasons

☐ Timestamps and reviewer identification

☐ Escalations and resolutions

Retention period: [PERIOD]

6.4 Audit Rights

☐ Provider may audit Deployer's oversight implementation

☐ Deployer may audit Provider's oversight capabilities

☐ Regulators may audit as required by law

ARTICLE 7: COMPLIANCE

7.1 EU AI Act Compliance

If the AI System is subject to the EU AI Act:

Provider shall (per Article 14):

☐ Design system for effective human oversight

☐ Enable understanding of system capacities and limitations

☐ Enable monitoring for anomalies, dysfunctions, and unexpected performance

☐ Enable correct interpretation of outputs

☐ Enable overriding or reversing outputs

☐ Enable intervention or stopping of operation

Deployer shall (per Article 26):

☐ Assign human oversight to competent, trained, and authorized persons

☐ Ensure oversight persons understand AI System and oversight tools

☐ Ensure oversight persons can intervene, override, or stop operation

☐ Implement oversight consistent with instructions for use

7.2 Colorado AI Act Compliance

If the AI System is subject to the Colorado AI Act:

☐ Impact assessment includes human oversight description

☐ Consumers informed of AI use in consequential decisions

☐ Appeal mechanism provides human review

☐ Documentation maintained as required

7.3 Other Regulatory Compliance

| Regulation | Requirement | Compliance Measure |
|---|---|---|
| [REGULATION 1] | [REQUIREMENT] | [MEASURE] |
| [REGULATION 2] | [REQUIREMENT] | [MEASURE] |

ARTICLE 8: REPRESENTATIONS AND WARRANTIES

8.1 Provider Representations

Provider represents and warrants:

☐ AI System supports human oversight as described

☐ Override capabilities function as documented

☐ System does not undermine meaningful human control

☐ Documentation accurately describes oversight features

8.2 Deployer Representations

Deployer represents and warrants:

☐ Will implement oversight as specified

☐ Will employ Qualified Personnel

☐ Will maintain required training

☐ Will not circumvent oversight requirements

ARTICLE 9: LIABILITY

9.1 Allocation of Liability

| Scenario | Primary Liability |
|---|---|
| AI System failure to support oversight | Provider |
| Deployer failure to implement oversight | Deployer |
| Qualified Personnel error after proper review | [ALLOCATION] |
| Failure due to inadequate training | [ALLOCATION] |
| Regulatory non-compliance | [ALLOCATION] |

9.2 Indemnification

[STANDARD INDEMNIFICATION PROVISIONS]

9.3 Limitation of Liability

[STANDARD LIMITATION OF LIABILITY PROVISIONS]

ARTICLE 10: TERM AND TERMINATION

10.1 Term

This Agreement commences on the Agreement Date stated above and continues for the duration of Deployer's use of the AI System.

10.2 Termination

Either party may terminate this Agreement if the other party commits a material breach that is not cured within [DAYS].

10.3 Effects of Termination

Upon termination:

☐ Deployer ceases use of AI System per underlying agreement

☐ Record retention obligations survive

☐ Confidentiality obligations survive

ARTICLE 11: GENERAL PROVISIONS

11.1 Governing Law

This Agreement is governed by the laws of [JURISDICTION].

11.2 Amendments

Amendments must be in writing signed by both parties.

11.3 Entire Agreement

This Agreement constitutes the entire agreement between the parties regarding human oversight of the AI System.

11.4 Severability

Any provision found invalid or unenforceable shall be modified to the minimum extent necessary to make it enforceable; the remaining provisions continue in effect.

SIGNATURES

PROVIDER:

Signature: _________________________________

Name: [NAME]

Title: [TITLE]

Date: _________________________________

DEPLOYER:

Signature: _________________________________

Name: [NAME]

Title: [TITLE]

Date: _________________________________

SCHEDULE A: AI SYSTEM DESCRIPTION

| Field | Description |
|---|---|
| System Name | [NAME] |
| Version | [VERSION] |
| Provider | [PROVIDER] |
| Purpose | [PURPOSE] |
| Decision Types | [TYPES] |
| Risk Classification | [CLASSIFICATION] |

SCHEDULE B: OVERSIGHT PROCEDURES

[DETAILED OVERSIGHT PROCEDURES]

SCHEDULE C: TRAINING REQUIREMENTS

[DETAILED TRAINING CURRICULUM]

This Human-in-the-Loop Requirements Agreement template is provided for informational purposes. Legal counsel review is recommended.

Do more with Ezel

This free template is just the beginning. See how Ezel helps legal teams draft, research, and collaborate faster.

AI that drafts while you watch

Tell the AI what you need and watch your document transform in real-time. No more copy-pasting between tools or manually formatting changes.

- Natural language commands: "Add a force majeure clause"

- Context-aware suggestions based on document type

- Real-time streaming shows edits as they happen

- Milestone tracking and version comparison

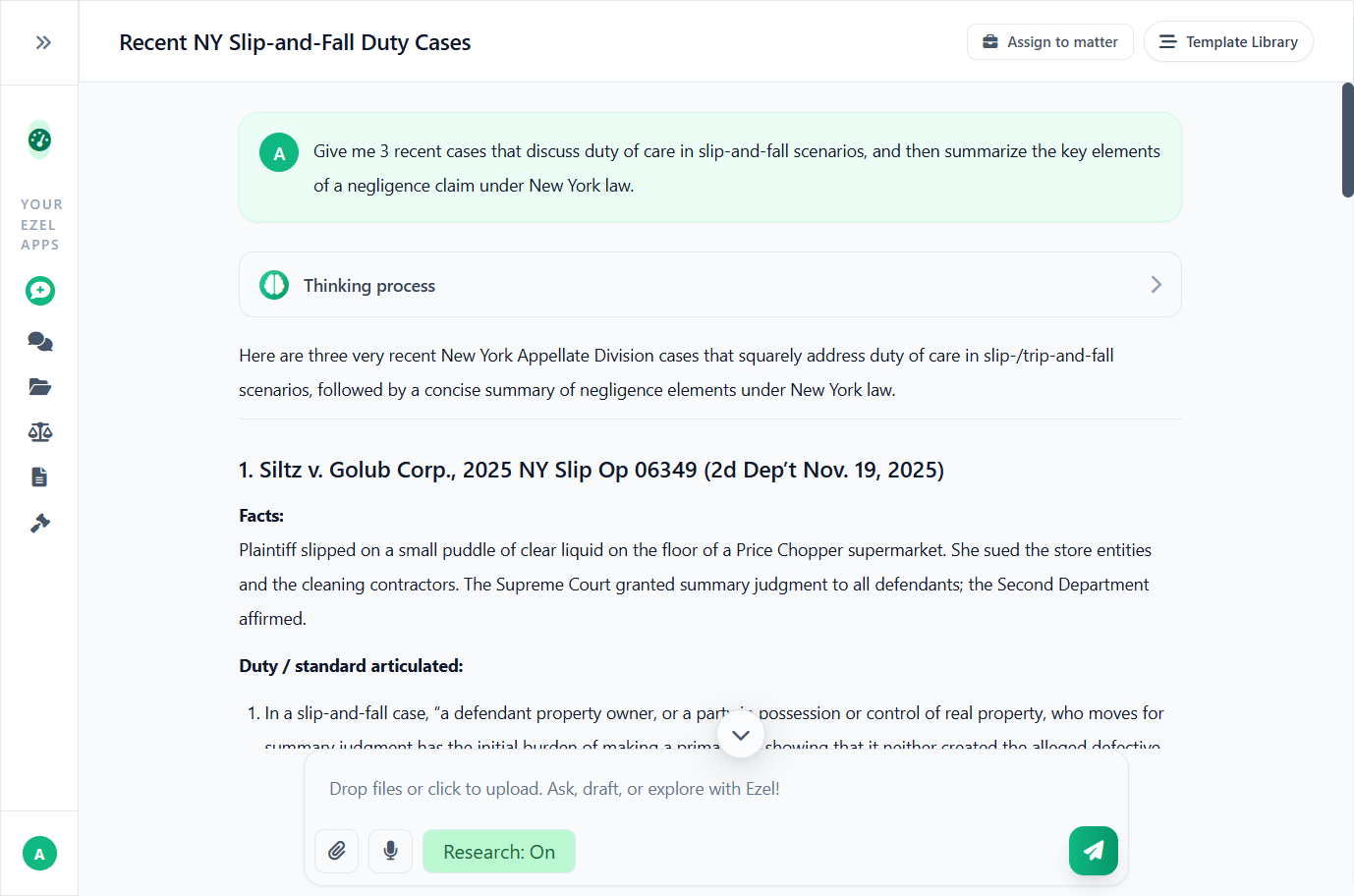

Research and draft in one conversation

Ask questions, attach documents, and get answers grounded in case law. Link chats to matters so the AI remembers your context.

- Pull statutes, case law, and secondary sources

- Attach and analyze contracts mid-conversation

- Link chats to matters for automatic context

- Your data never trains AI models

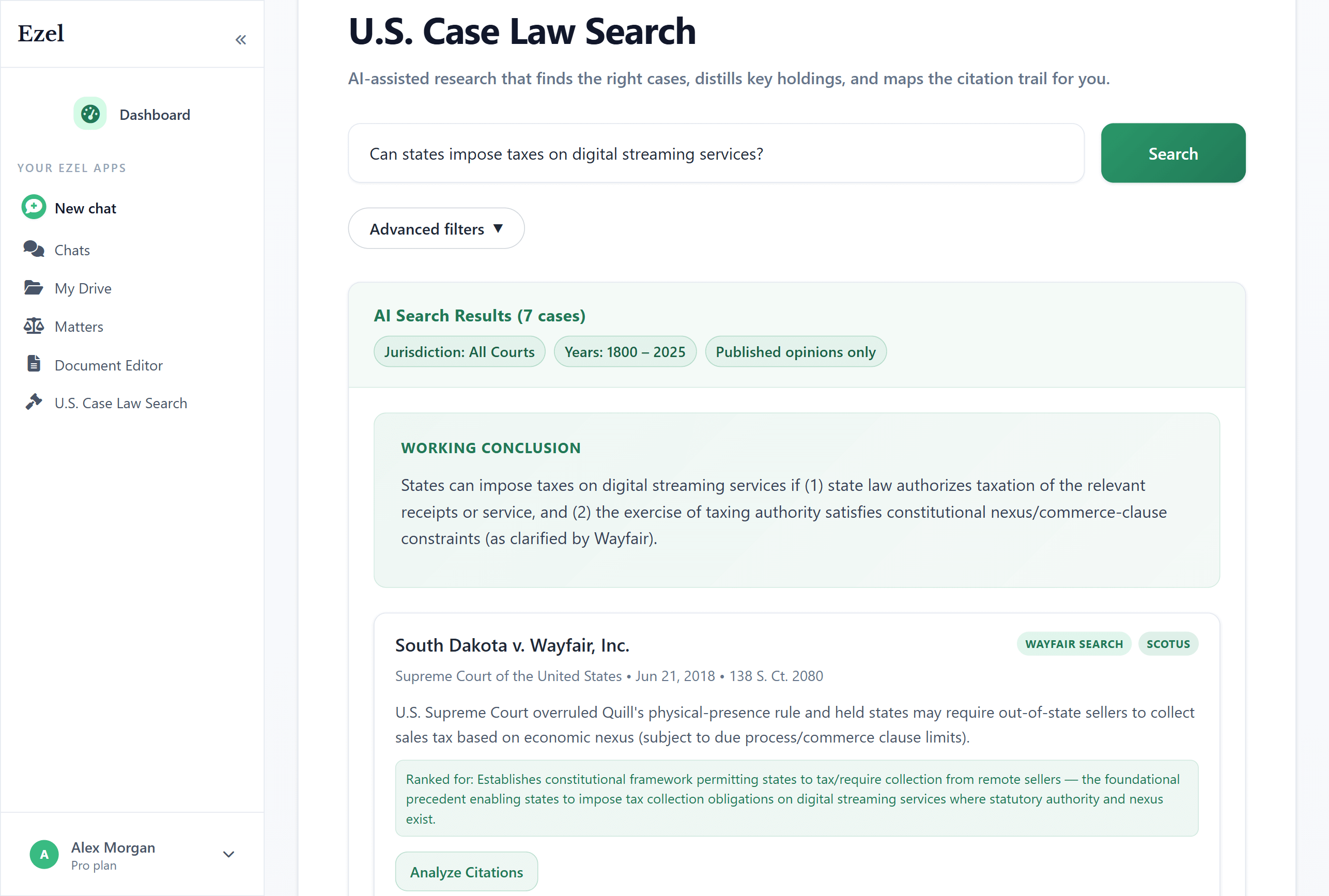

Search like you think

Describe your legal question in plain English. Filter by jurisdiction, date, and court level. Read full opinions without leaving Ezel.

- All 50 states plus federal courts

- Natural language queries - no boolean syntax

- Citation analysis and network exploration

- Copy quotes with automatic citation generation

Ready to transform your legal workflow?

Join legal teams using Ezel to draft documents, research case law, and organize matters — all in one workspace.